
AI Integration Security: Why the Biggest Risk Is Not the Model

When people talk about AI security risks, the conversation usually starts with the model. Can it be jailbroken? Can someone get around the guardrails? Can an attacker make it say or do something it should not?

Those are fair questions, but they are not the most important ones.

The bigger risk is not the model on its own: it’s everything the model is connected to.

That is where AI integration security comes in. The moment an assistant stops being just a chatbot and starts interacting with real systems, the risk changes. It is no longer only generating text. It is operating inside workflows, touching data, and inheriting access across tools and environments. That is where privilege starts to accumulate faster than most AI governance programs can keep up.

The misplaced focus of AI security

Jailbreaks still dominate much of the conversation around AI security. They are easy to understand, easy to share, and undeniably compelling. They make for strong demos, headlines, and conference talks.

But a bypass on its own is not always the disaster it is made out to be.

A model producing a manipulated or unsafe response is one thing. A model doing so while embedded in a live workflow is something else entirely. The risk changes when the system is no longer just answering prompts but influencing decisions, surfacing sensitive information, or triggering downstream actions. OWASP’s current guidance on prompt injection and AI agents makes exactly this point: the danger grows when models are connected to tools, APIs, and sensitive data, because a successful injection can then lead to unauthorized actions and data exfiltration.

That is the distinction many people still blur.

The real danger is not simply that a model can be tricked. It is that a tricked model may already be connected to the systems that matter most. What happens when that model has access to email, messaging platforms, or proprietary documents and data? Researchers have long documented how attackers abuse trusted business communications, impersonate executives, and use social engineering to gain access or influence decisions. In an AI-enabled environment, that kind of abuse can become even more efficient if a compromised assistant is already sitting in the middle of key workflows.

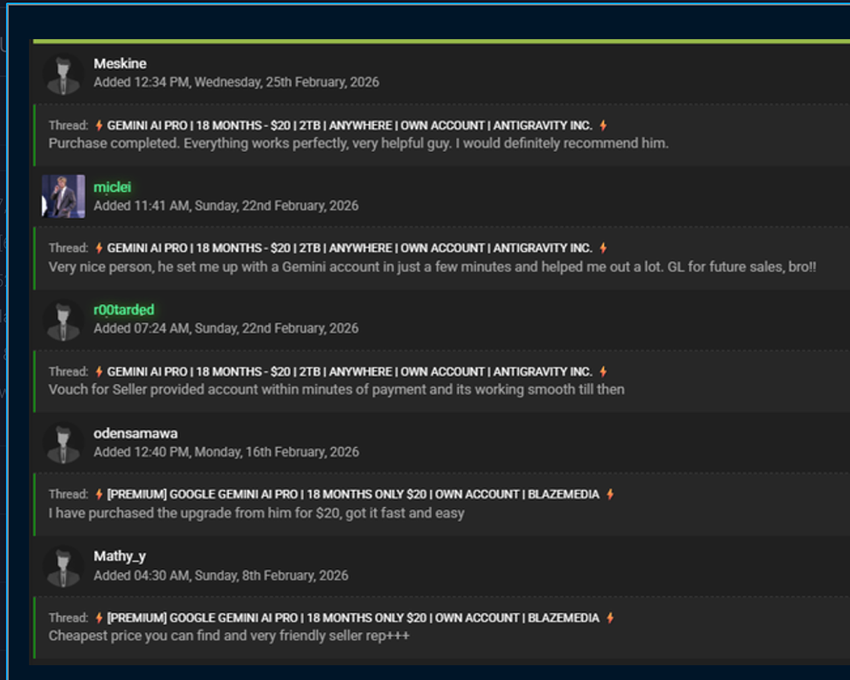

Over the last year, Bitsight Threat Intelligence researchers have observed continued discussion across the deep and dark web around AI access, jailbreaks, credentials, and the broader security implications of enterprise AI adoption. Underground activity also shows that valid credentials and access remain highly valuable to threat actors, which is exactly why connected AI systems deserve more scrutiny.

The privilege amplification problem

Breadth of connectivity is what makes AI assistants so valuable: they can draw on SIEMs, messaging platforms, ticketing systems, cloud APIs, and internal knowledge bases. It is also what makes them risky.

Once an AI assistant is connected across those systems, it is no longer just generating content. It is inheriting access, context, and permissions from every environment it touches. In many ways, it becomes a new control layer across the enterprise.

And if that layer is compromised, events can escalate quickly.

An attacker may not need to breach each system individually. They may only need to manipulate the assistant sitting at the center. From there, the consequences can become serious fast: workflow manipulation, data exfiltration, unauthorized actions, automation abuse, or subtle interference with the tools security teams depend on to investigate and respond. OWASP explicitly warns that prompt injections in connected systems can lead to unauthorized actions through tools and APIs. In some cases, widespread damage can stem from the compromise of a single AI-enabled security tool, as seen recently with OpenClaw.

That is where the asymmetry becomes clear. A single compromised integration layer can consolidate access that was once distributed across multiple tools, teams, and approval points.

The integration attack surface

The risk does not start and stop with the assistant interface. It lives in everything behind it.

That includes API keys sitting in configuration files, OAuth tokens with broad access, and service accounts that have quietly accumulated more privilege than anyone intended. In plenty of environments, old accounts stay active long after their owners leave the company. Temporary permissions become permanent because no one circles back to remove them. People get busy and forget. It is an easy mistake to make, and a common one that can end in disaster.

Weak authentication makes it worse. Exposed dashboards, reused passwords, and simple credentials can all create openings into systems that were never meant to support this kind of AI-driven access.

Then there are shadow deployments. Teams move fast, spin up tools outside formal review, and connect systems before anyone has really thought through the implications. Every new integration expands the blast radius beyond the model itself.

This is the part of the AI security conversation that deserves much more attention. In many cases, the real exposure has less to do with what the model can say and more to do with what the surrounding architecture quietly allows it to touch.

The real threat model shift

A traditional enterprise attack usually follows a familiar path. An attacker compromises one system, moves laterally, escalates privileges, accesses sensitive data, and then turns that access into disruption, theft, or extortion.

AI-integrated environments can shrink that path.

If an attacker compromises the assistant, they may inherit access to everything the assistant can reach. The integrations have already done the hard work of connecting systems and aggregating permissions. What used to take several steps of lateral movement can suddenly become one.

That is the real shift in the threat model.

The assistant becomes a shortcut through the environment. Instead of moving system by system, the attacker moves through the connective layer that already ties everything together. The same efficiency that makes these tools powerful for defenders can make them just as powerful for attackers.
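The aggregation described in this section can be made concrete with a minimal Python sketch. It assumes each integration can be reduced to a flat set of permission strings; the integration names and scopes below are hypothetical placeholders, not any real product's permission model. Compromising one system yields only that system's grants, while compromising an assistant wired into all of them yields the union.

```python
# Hypothetical integrations and the permissions each one grants the assistant.
# Names and scope strings are illustrative placeholders, not real product scopes.
INTEGRATIONS: dict[str, set[str]] = {
    "siem": {"alerts:read", "rules:write"},
    "messaging": {"messages:read", "messages:send"},
    "ticketing": {"tickets:read", "tickets:write"},
    "cloud_api": {"instances:read", "instances:stop"},
    "knowledge_base": {"docs:read"},
}


def reach_from_single_system(system: str) -> set[str]:
    """Permissions an attacker gains by compromising one system on its own."""
    return set(INTEGRATIONS[system])


def reach_from_assistant(connected: list[str]) -> set[str]:
    """Permissions an attacker gains by compromising an assistant wired into
    every listed integration: the union of all of their grants."""
    reach: set[str] = set()
    for name in connected:
        reach |= INTEGRATIONS[name]
    return reach


if __name__ == "__main__":
    single = reach_from_single_system("ticketing")
    assistant = reach_from_assistant(list(INTEGRATIONS))
    print(f"one system:    {len(single)} permissions")
    print(f"the assistant: {len(assistant)} permissions")
    print("only via the assistant:", sorted(assistant - single))
```

Run as a script, it reports 2 permissions for the ticketing system alone and 9 for the assistant. The model is deliberately simplified; real permission systems are richer than flat sets of strings, but the union captures why one compromised connective layer can replace several steps of lateral movement.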

Attackers get an AI boost too

It is easy to talk about AI as a force multiplier for defenders. In many ways, it is. These tools can help teams move faster, connect information across systems, and automate parts of the security workflow that used to take much longer.

But defenders are not the only ones getting that advantage.

Attackers can use AI to accelerate reconnaissance, process large amounts of technical information, identify exposed services, analyze integration patterns, and understand how AI-enabled tools are connected into enterprise environments. That makes it easier to find weak points in the systems surrounding the model, especially when those systems have broad permissions and limited oversight.

This is what makes the integration layer so important. The more connected the assistant is, the more valuable it becomes not just to defenders, but to attackers looking for a shortcut into the environment.

We continue to see underground conversations in which threat actors offer access to AI servers, jailbreaking capabilities, and credentials for AI accounts.

The governance gap

This is why governance matters so much, and why so many organizations are still behind.

AI adoption is moving quickly. In a lot of environments, the culture is still basically to connect it and see what happens. Permission scopes do not get reviewed closely enough. Integration decisions happen in silos, while the risk builds across the organization.

Fixing things one model bypass at a time is not going to solve that.

What organizations really need is tighter access minimization, better visibility into what AI tools are deployed, formal approval processes for integrations, and ongoing permission reviews. They need to know which tools are connected, what those tools can access, and whether that access still makes sense.

Without that kind of governance, AI adoption will keep moving faster than security oversight.
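Ongoing permission reviews of this kind can start small. The Python sketch below assumes a hypothetical `Grant` record, an illustrative 90-day idle threshold, and a hypothetical list of approved integrations; in practice, this data would come from an identity provider or an inventory of deployed AI tools. It flags grants from integrations that never went through approval, temporary grants that are past their expiry, and grants that have gone unused.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy values, chosen only for illustration.
MAX_IDLE = timedelta(days=90)
APPROVED_INTEGRATIONS = {"ticketing", "knowledge_base"}


@dataclass
class Grant:
    """One permission held by an AI integration (hypothetical record shape)."""
    integration: str
    scope: str
    last_used: date
    expires: Optional[date] = None  # set only for grants meant to be temporary


def review(grants: list[Grant], today: date) -> list[tuple[Grant, str]]:
    """Return (grant, reason) pairs for grants to revoke or re-justify."""
    findings: list[tuple[Grant, str]] = []
    for g in grants:
        if g.integration not in APPROVED_INTEGRATIONS:
            findings.append((g, "integration never went through approval"))
        elif g.expires is not None and g.expires < today:
            findings.append((g, "temporary grant past its expiry"))
        elif today - g.last_used > MAX_IDLE:
            findings.append((g, f"unused for more than {MAX_IDLE.days} days"))
    return findings


if __name__ == "__main__":
    today = date(2025, 6, 1)
    grants = [
        Grant("ticketing", "tickets:write", last_used=date(2025, 5, 30)),
        Grant("knowledge_base", "docs:read", last_used=date(2024, 11, 2)),
        Grant("ticketing", "tickets:admin", last_used=date(2025, 5, 1),
              expires=date(2025, 3, 1)),
        Grant("shadow_notes_app", "files:read", last_used=date(2025, 5, 31)),
    ]
    for grant, reason in review(grants, today):
        print(f"{grant.integration}/{grant.scope}: {reason}")
```

With the sample data, the review flags the idle knowledge-base grant, the expired temporary ticketing grant, and the unapproved `shadow_notes_app` integration, while leaving the recently used, approved ticketing grant alone.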

The question security leaders should be asking

The key question is not just, “Can this model be jailbroken?”

It is, “What can this system do if it is?”

That is what determines blast radius.

If your AI security tool is connected to the systems you rely on every day, it may have visibility into code, alerts, internal conversations, incident workflows, cloud infrastructure, and sensitive business context. If that layer is manipulated or compromised, the problem stops being theoretical very quickly. It becomes an enterprise control issue.

Imagine your security tool has access to almost everything you touch at work. Now imagine it gets hacked.

What happens next?

What workflows could be changed? What data could be pulled? What actions could be triggered? How quickly would you know? And just as important, how would you contain it?

Those are the questions that should shape the next phase of AI security.

How Bitsight can help

If the integration layer is where AI risk starts to compound, organizations need more than model protections. They need visibility into the wider environment around those deployments, including exposed assets, third-party dependencies, critical vulnerabilities, shadow relationships, and emerging threats.

That is where Bitsight can play an important role.

The Bitsight Cyber Risk Intelligence Platform helps organizations understand their extended attack surface, exposures, and threats in real time, along with the business context needed to prioritize action.

That matters here because integration-layer risk is ultimately a visibility and prioritization problem. Security teams need to understand where connected systems may be introducing risk, where hidden or exposed assets may exist, and where third-party dependencies increase potential blast radius. Bitsight’s Security Posture Management offering is positioned around discovering assets, analyzing exposure, and helping teams prioritize vulnerabilities across their own environment and third-party ecosystem.

The challenge is also ongoing, not one-time. Bitsight’s Continuous Monitoring approach is designed to help organizations keep track of changes in security posture across their own environment and their third-party relationships, including alerts and vendor discovery that can help uncover both known and shadow IT connections.

That is especially relevant in AI-enabled environments, where integrations often stretch well beyond internal systems and into outside platforms, vendors, and service providers. Bitsight’s Third-Party Risk Management capabilities are positioned around helping organizations assess, validate, and continuously monitor that broader digital supply chain.

Bitsight Cyber Threat Intelligence enables teams to connect emerging threats, TTPs, and indicators to an organization’s specific attack surface so teams can make better prioritization decisions. As AI integrations increase the number of connected systems and shorten the window to identify real exposure, that kind of context becomes even more important.

These capabilities do not replace internal governance, access controls, or formal approval processes. They can, however, help organizations improve visibility, monitoring, and risk prioritization across the external and third-party ecosystem that makes integration-layer risk so significant.

To learn more, talk to our team.