Don’t let AI adoption outpace security. Establish an AI Council, vet vendors, and enforce policies to keep AI under control. Discover the framework top enterprises use.

Are AI Security Tools the New EDR? Attackers Are Treating Them That Way

AI security tools are no longer just defensive layers. They are high value targets being studied, fingerprinted, and bypassed much like traditional endpoint detection and response (EDR) platforms and antivirus solutions were in their early days. The speed and scale at which these tools are being deployed makes reactive defense increasingly unsustainable. That reality is pushing the conversation beyond guardrails and jailbreaks toward something less flashy but far more important: governance and structural controls.

If this feels familiar, it should.

When EDR platforms first gained traction, they were seen as a major leap forward. Organizations finally had deep visibility into endpoints, behavioral detection, and the ability to stop attackers after compromise. There was real optimism that EDR would meaningfully shift the balance toward defenders.

Attackers responded quickly.

Rather than avoiding EDR, threat actors studied it. They analyzed detection logic, reverse engineered agents, tested payloads in controlled environments, and refined techniques until they could evade or disable protections. In some cases, when direct evasion proved difficult, they shifted tactics entirely. A widely reported example involved the ransomware group Akira, which pivoted to exploiting an unsecured internet facing webcam to gain initial access after other paths were blocked. The lesson was not that EDR failed. It was that attackers adapt. When one control becomes harder to bypass, they look for weaknesses elsewhere in the environment.

AI security tools are entering that same lifecycle.

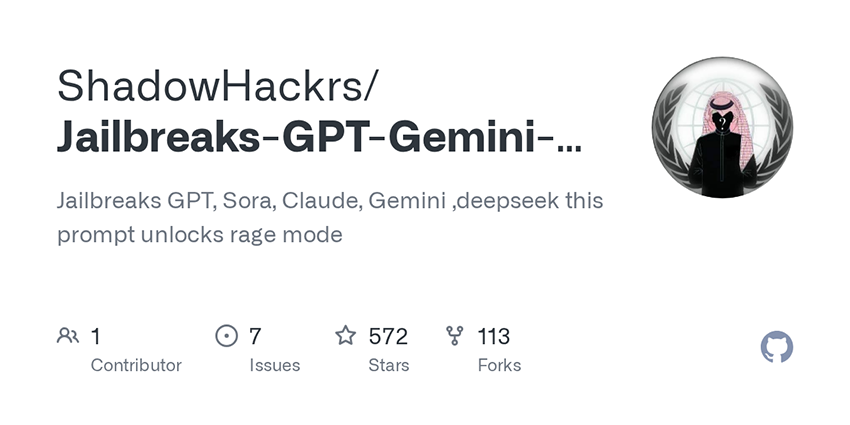

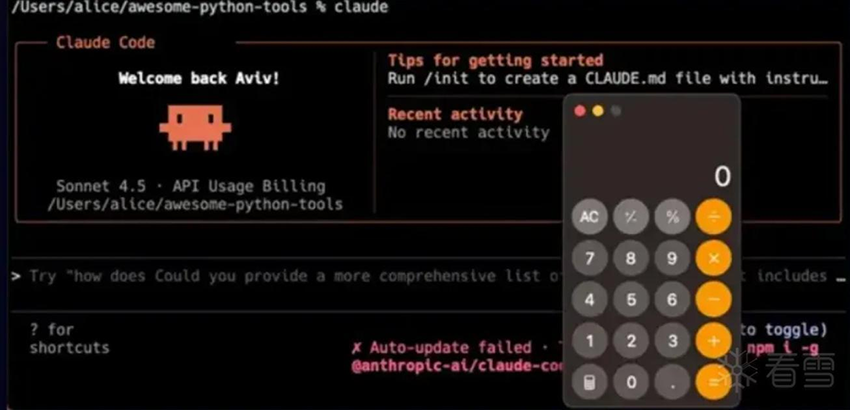

Today, AI is embedded into alert triage, case summarization, phishing detection, code review, and even automated response workflows. These systems are being integrated directly into security operations, often with access to sensitive telemetry and decision making processes. At the same time, we are already seeing jailbreak repositories, model specific bypass prompts, and discussions around how different AI powered tools behave under stress.

The key difference is velocity.

EDR adoption took years to become widespread across enterprises. AI tooling is spreading across environments far faster, often with minimal friction. Cloud APIs, SaaS integrations, and developer friendly deployment models mean AI features can move from pilot to production in weeks. That compresses the window between deployment and adversarial research. The moment a tool gains traction, it becomes a candidate for testing, probing, and bypass attempts.

AI is an incredible capability used by almost everyone, including defenders and threat actors alike. It is easy to focus on how it improves efficiency and forget that any tool that meaningfully changes workflows will also be examined for weaknesses. Attackers do not ignore defensive innovation. They treat it as a challenge to solve.

That is why the conversation cannot stay focused only on bypass techniques and model level fixes. When deployment speed and adversarial experimentation both accelerate, reactive patching turns into a whack-a-mole exercise. Guardrails will improve. Vendors will harden systems. Red teaming will mature. But without stronger governance, clear ownership, and enforced controls around how AI security tools are deployed and integrated, the gap between innovation and oversight will continue to widen.

We are not just deploying AI to defend systems anymore. We are introducing a new layer of infrastructure that must itself be governed, monitored, and protected.

From guardrail to target

It does not take long for a defensive innovation to become a research target.

We are already seeing jailbreak repositories emerge, along with increasing chatter on deep and dark web forums about bypassing different AI powered tools. Discussions are no longer limited to general prompt engineering tricks. In some cases, actors are comparing notes on how specific models or vendor implementations behave under certain inputs. That shift suggests movement from curiosity to intentional bypass development.

Model-specific bypass prompts are beginning to circulate. These are crafted inputs designed to exploit weaknesses in a model’s instruction handling, role enforcement, or safety layers. Instead of broadly asking how to jailbreak AI, attackers are testing what works against a particular product configuration. That is a meaningful escalation.

Tool fingerprinting is part of this process. By analyzing response patterns, error messages, latency differences, and stylistic outputs, it is often possible to infer which underlying model or safety wrapper a vendor is using. Once fingerprinted, a tool can be subjected to targeted bypass attempts. This mirrors how attackers historically profiled EDR agents before developing evasion techniques tailored to them.

We are also seeing public sharing of what works against specific vendors. Whether in open repositories, private channels, or forum discussions, successful prompt chains and bypass strategies are being exchanged and refined. That collaborative testing model accelerates improvement on the offensive side. One actor experiments, another optimizes, and a third operationalizes.

This is not random experimentation. It’s adaptation.

As bypass techniques circulate at scale, defending through incremental patching becomes increasingly difficult. Each time a vendor closes one loophole, new variations are tested against the updated system. Distributed testing by attackers, combined with the speed at which prompts can be generated and iterated using AI itself, creates a feedback loop that favors rapid evasion. Without broader controls around deployment, permissions, and governance, relying solely on guardrail updates risks turning defense into a continuous whack a mole exercise.

The bypass playbook

The pattern becomes even clearer when compared to classic EDR evasion.

Over the past several years, attackers have repeatedly demonstrated that when a defensive control becomes widespread, it becomes a research target. Bring your own vulnerable driver (BYOVD) attacks are a strong example. Instead of directly disabling an EDR agent in a noisy way, threat actors load legitimately signed but vulnerable drivers to gain kernel level access. From there, they can terminate security processes or tamper with protections in ways that bypass built in safeguards. The control is not ignored. It is studied and circumvented.

The 2023 Cluster Bomb ransomware campaign showed a similar mindset. Researchers reported that the operators used layered encryption and frequent code restructuring to make their payloads difficult for detection engines to analyze. The malware was not static. It evolved constantly to stay ahead of signatures and behavioral detection logic. The objective was simple: keep changing until the defensive model fails to recognize you.

Historically, EDR evasion techniques included obfuscation, custom packers, API unhooking, disabling telemetry, and kernel tampering. Attackers tested payloads in labs, measured which behaviors triggered alerts, adjusted, and tested again. The cycle repeated until detection rates dropped to an acceptable level.

Now, with AI security tools, the techniques look different but the logic is the same. Instead of packers and API unhooking, we see prompt chaining, role manipulation, and context poisoning. Instead of encrypting binaries, actors manipulate instructions, exploit ambiguity in natural language, or attempt to override system prompts. The surface area has shifted from executable code to model behavior, but the methodology remains consistent.

Both eras share the same dynamic: iterative testing until detection fails. Attackers probe, measure responses, refine techniques, and share results with peers. What changes in the AI context is the speed. Prompts can be generated and modified in seconds. Testing does not require a complex malware build chain. With automation and accessible AI tooling, the feedback loop tightens dramatically.

The lesson is not that AI defenses are ineffective. It is that any widely adopted defensive technology will face sustained and organized efforts to bypass it. AI security tools are entering the same adversarial cycle that EDR experienced, but at a much faster pace.

Why this matters

AI security tools sit in privileged workflows.

They influence triage, alerting, and automation decisions.

Bypassing them may distort detection outcomes.

At scale, even partial manipulation can degrade trust in automated security decisions. The more organizations rely on AI driven workflows, the more attractive these systems become as strategic choke points.

The governance imperative

Vendor fingerprinting arms races will continue.

Guardrail hardening cycles will accelerate.

AI specific red teaming will become standard.

But technical countermeasures alone will not be enough. The speed and scale of AI adoption makes reactive control unsustainable. Organizations will need stronger governance and AI governance councils, clearer accountability for AI tool deployment, defined risk ownership, and enforced access controls. The technology is still in its early stages, but how governance frameworks evolve alongside it will determine whether AI security tools mature into resilient controls or recurring liabilities.

We are not just defending with AI anymore. We are defending AI itself. And that requires governance as much as guardrails.

How Bitsight can help

As AI security tools become embedded into critical workflows, visibility becomes the first line of defense. You cannot govern what you cannot see.

One of the challenges organizations face today is not just bypass techniques, but uncontrolled deployment. AI-powered tools are often stood up quickly, integrated broadly, and connected to sensitive systems with little centralized oversight. Over time, this creates blind spots across the attack surface.

Bitsight helps organizations move from reactive patching to structural risk management by providing external visibility into exposed assets and emerging technologies across their environment. That includes identifying internet facing systems, misconfigurations, and new technology adoption that may expand the attack surface in unintended ways.

As we have seen with rapidly adopted tools such as AI agents and model driven platforms, exposed interfaces, weak authentication, and overpermissioned integrations can introduce risk long before a bypass technique is even required. Early detection of exposure patterns allows security teams to address governance gaps before they become incidents. Beyond asset discovery, Bitsight’s attack surface intelligence and entity mapping capabilities help organizations understand where technology is deployed, which business units are associated with it, and how it connects to broader infrastructure. This supports clearer accountability and ownership, which are essential as AI becomes embedded into operational workflows.

AI security tools should not operate in the shadows of the environment. They should be subject to the same governance, monitoring, and risk evaluation as any other critical system.

The shift from whack-a-mole patching to structural control starts with visibility. Bitsight helps organizations understand where risk is emerging so they can apply governance before adversaries apply pressure.